This post was most recently updated on January 18th, 2023

Human or bot? You be the judge. The latest version of Google’s reCAPTCHA, which attempts to differentiate between humans and bots, is raising some eyebrows. Some say it’s more accurate than previous versions, while others claim that it’s just creating more work for publishers. So what’s the verdict? We’re here to break it down for you.

First developed in the late 90s, CAPTCHA is a security measure that uses visual or audio challenges to distinguish bots from human users. Its main purpose is to stop generic bots from signing up for accounts & spamming the comment section. reCAPTCHA, on the other hand, launched in 2007 and was acquired by Google in 2009; it is now used by millions of sites. reCAPTCHA is free up to 1 million API calls a month. If your website generates more than a million calls every month, then reCAPTCHA Enterprise is the way to go, at $1 for every 1,000 calls up to 10 million calls a month.

You have probably come across reCAPTCHA v2 on sites that ask you to pick out crosswalks and similar objects from a grid of images. It launched in 2014 and is used by the majority of websites. When it sees suspicious or invalid user activity, reCAPTCHA v2 serves a puzzle (an image recognition task) that the visitor needs to solve to prove they are human. Some sites use the basic checkbox version, which lets users pass as legitimate simply by checking the “I’m not a robot” box.

The task you get depends on how sure Google is that you are not a bot. reCAPTCHA v2 relies mainly on Google cookies, since it runs a risk analysis behind the scenes. Chrome users will most likely only have to check the ‘not a robot’ box, while Firefox users with third-party cookies disabled tend to get image recognition puzzles that are hard to pass. We haven’t taken Safari into account here, but it comes with its own bot blocking services.

The downside of reCAPTCHA v2 is that it degrades the user experience and lowers conversion rates, especially when the puzzles are more time-consuming than usual. Additionally, fraudsters can now bypass many reCAPTCHA challenges, which makes it less dependable for publishers as a fraud protection solution. With the help of AI & machine learning, botnets can be trained to solve these puzzles, so they arrive already prepared to beat reCAPTCHA.

Just like click farms, there are CAPTCHA farms, where fraudsters outsource reCAPTCHA challenges to human workers in tier-3 countries. So what does it take to bypass reCAPTCHA v2? These puzzles can be passed by sending a callback request with the response token.

Since JavaScript is not actually necessary here, cybercriminals design bots that use basic HTTP request libraries rather than full browsers. CAPTCHA farms are also cheap, as they pay these workers around $1-5 to solve a thousand or more puzzles.

reCAPTCHA v3 is the leveled-up version of v2, built to catch sophisticated invalid traffic (SIVT) while offering a better user experience. It is invisible to the user & works in the background, as there are no puzzles to solve with v3. It mainly relies on behavioral detection to decide whether the user is legitimate or not. For each request the bot or human user makes on the website, reCAPTCHA v3 returns a score between 0.0 and 1.0, with scores near 0 meaning a likely bot & scores near 1 meaning you’re likely human.
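
To make the scoring step concrete: the token generated in the browser is sent from your server to Google’s `siteverify` endpoint, and the JSON reply carries a score between 0.0 and 1.0. The Python sketch below only builds the request body and parses a reply, without making a network call. The helper names, the secret and token values, and the sample reply are placeholders made up for illustration.

```python
import json
from typing import Optional
from urllib.parse import urlencode

# Google's verification endpoint for reCAPTCHA tokens (expects a POST).
SITEVERIFY_URL = "https://www.google.com/recaptcha/api/siteverify"


def build_verify_body(secret: str, token: str) -> bytes:
    """Form-encode the POST body for siteverify (hypothetical helper)."""
    return urlencode({"secret": secret, "response": token}).encode("utf-8")


def parse_score(raw_json: str) -> Optional[float]:
    """Return the v3 score, or None if verification failed or no score is present."""
    data = json.loads(raw_json)
    if not data.get("success"):
        return None
    score = data.get("score")
    return float(score) if score is not None else None


# Placeholder reply shaped like a v3 siteverify response; the values are made up.
sample = '{"success": true, "score": 0.7, "action": "login", "hostname": "example.com"}'
print(parse_score(sample))
```

In a real integration, the bytes from `build_verify_body` would be posted to `SITEVERIFY_URL` from the server, never from the browser, since the secret key must stay private.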

Publishers can always tweak the settings to make the reCAPTCHA v3 scoring system more accurate at telling bot behavior from human behavior. But it does come with a few flaws. With v3, publishers need to decide which action to take for each score, which becomes challenging at times since it’s not automated. Even the most experienced website admins get stuck when it comes to getting the configuration right.
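
One way to think about that configuration is as a small policy table that maps a score to an action. The Python sketch below is only an illustration: the thresholds, the action names (`block`, `challenge`, `allow`), and the stricter `checkout` policy are assumptions, not values recommended by Google or used by any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    block_below: float      # scores under this are treated as likely bots
    challenge_below: float  # scores under this (but not blocked) get an extra check


# Hypothetical per-action thresholds; stricter for sensitive actions.
POLICIES = {
    "default": Policy(block_below=0.3, challenge_below=0.7),
    "checkout": Policy(block_below=0.5, challenge_below=0.9),
}


def decide(score: float, action: str = "default") -> str:
    """Map a reCAPTCHA v3 score (0.0 to 1.0) to 'block', 'challenge', or 'allow'."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    policy = POLICIES.get(action, POLICIES["default"])
    if score < policy.block_below:
        return "block"
    if score < policy.challenge_below:
        return "challenge"
    return "allow"


for s in (0.1, 0.5, 0.9):
    print(s, decide(s), decide(s, "checkout"))
```

The hard part in practice is picking the numbers: a threshold that is too strict turns away real users, while one that is too loose lets bots through, and the right values differ from site to site.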

1. Poor user experience in v2. Real users find these puzzles time-consuming & drop off.

2. Sophisticated bots can easily bypass v3.

3. Can’t monitor false positives and negatives.

4. Assigning the right score threshold in v3 is an arduous task.

5. CAPTCHA farms & machine learning algorithms let fraudsters solve almost any CAPTCHA challenge.

In a nutshell, neither of the above solutions is good enough for ad fraud protection & bot blocking.

We cannot use reCAPTCHA v2 and v3 on every single page to verify users, since that would increase bounce rates and, in turn, drastically decrease the publisher’s ad revenue. This is where a reliable invalid traffic management solution like Traffic Cop can actually solve the bot problem for publishers.

Here’s why:

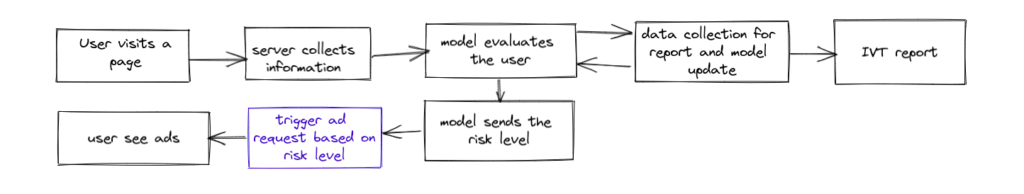

With the Traffic Cop dashboard, you get detailed reports and analysis on traffic type, bot traffic by country, device, IP address and much more. Once bot traffic is detected, it is further classified into harmless, suspicious, and critical invalid traffic. After that, bad bot behavior is blocked for good and you’ll never have to worry about ad revenue clawbacks again.

Traffic Cop does use CAPTCHAs as feedback loops, so that if a human user gets blocked they can still carry on navigating. If the human or bot can’t solve even the basic CAPTCHA puzzle, ads on that site are hidden from that user or bot to avoid abusive clicks on ads, abusive ad refresh, etc. Our AdOps teams have developed advanced algorithms to make sure that CAPTCHAs are solved by legitimate users and not by CAPTCHA farms or sophisticated bots. We’ve invalidated millions of forged CAPTCHA responses so far.

When it comes to detecting sophisticated bots that can drastically decrease your ad revenue in the long term, we’ve come up with intricate bot detection via IP address to make sure even the most sophisticated bots cannot bypass Traffic Cop. Publishers like clark.com and Blurb Media Inc. had experienced massive revenue clawbacks due to bots, and after implementing Traffic Cop their clawbacks dropped from 86% to 1%, which has been a lifesaver for them. Read more here.

With over seven years at the forefront of programmatic advertising, Aleesha is a renowned Ad-Tech expert, blending innovative strategies with cutting-edge technology. Her insights have reshaped programmatic advertising, leading to groundbreaking campaigns and 10X ROI increases for publishers and global brands. She believes in setting new standards in dynamic ad targeting and optimization.
